Prevent costly SEO mistakes with automated robots.txt monitoring

One line in a robots.txt file can take your entire site out of Google overnight. A staging config pushed to production, a well-meaning dev blocking a directory, an updated CMS template: it happens more often than SEOs like to admit, and there’s usually nothing in place to catch it.

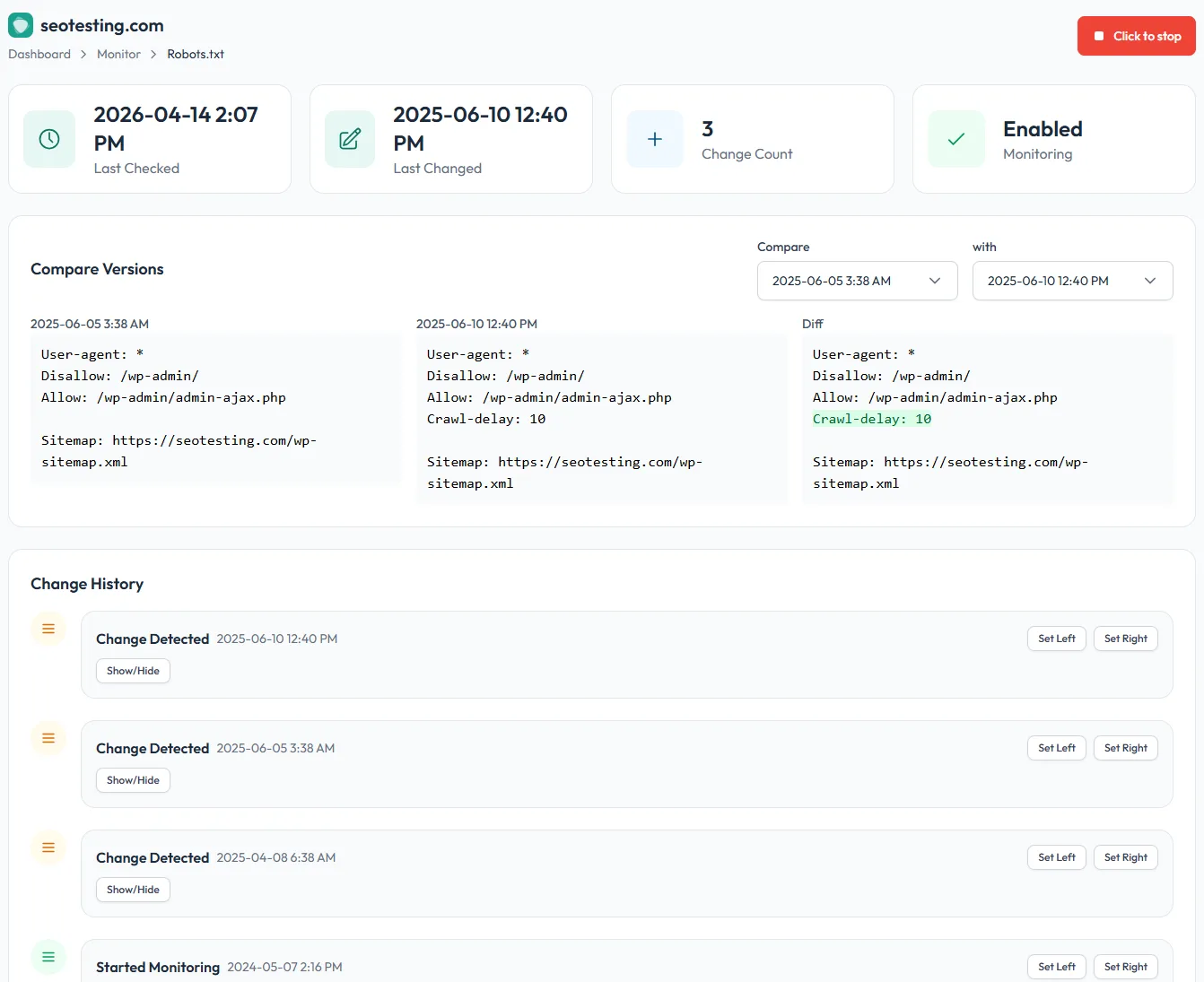

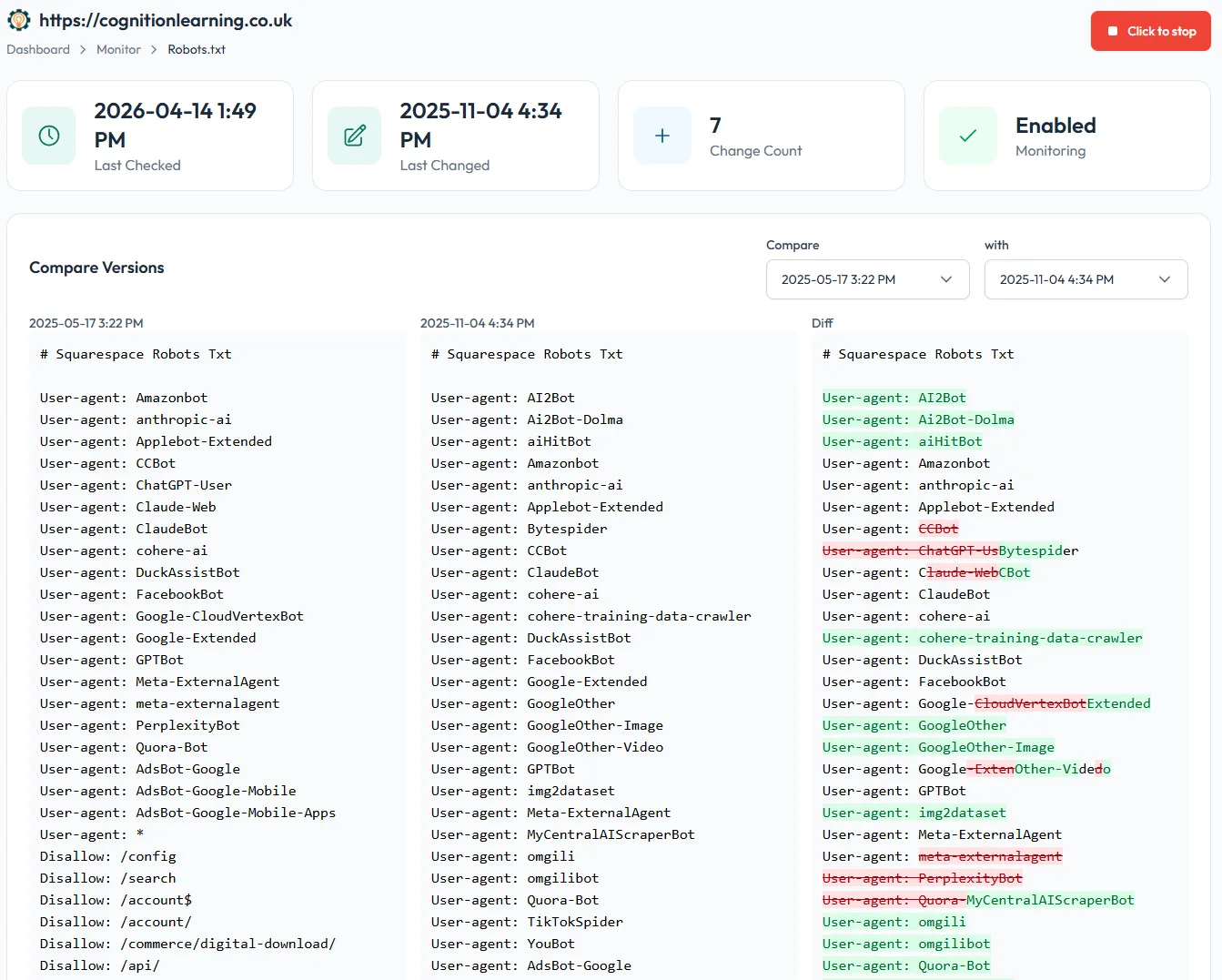

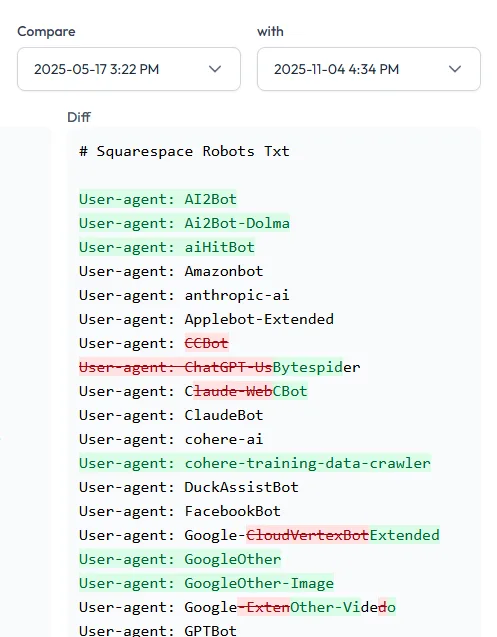

SEOTesting’s robots.txt Monitor checks your file every 3 hours, stores every version, and alerts you as soon as it detects a change. You get the answer to “what changed, and when?” within hours, not a week later when the ranking drop turns up in Search Console.
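To make the check, store, and alert idea concrete, here is a minimal Python sketch of the comparison step only. It is an illustration, not SEOTesting’s implementation: the function names `fingerprint` and `check_for_change` and the two sample file versions are hypothetical, and fetching the file, scheduling the 3-hour check, and sending the alert are left out.

```python
import difflib
import hashlib


def fingerprint(content: str) -> str:
    """Hash a robots.txt body, ignoring line-ending differences."""
    normalized = "\n".join(content.splitlines())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def check_for_change(previous: str, current: str) -> list[str]:
    """Return unified-diff lines if the file changed, else an empty list."""
    if fingerprint(previous) == fingerprint(current):
        return []
    return list(
        difflib.unified_diff(
            previous.splitlines(),
            current.splitlines(),
            fromfile="previous",
            tofile="current",
            lineterm="",
        )
    )


# Hypothetical versions: a staging rule that slipped into production.
old_version = "User-agent: *\nDisallow: /admin/\n"
new_version = "User-agent: *\nDisallow: /\n"

changes = check_for_change(old_version, new_version)
if changes:
    # A real monitor would store the new version and send an alert here.
    print("robots.txt changed:")
    print("\n".join(changes))
```

In this example the diff shows `Disallow: /admin/` being replaced by `Disallow: /`, which is exactly the kind of one-line change that blocks an entire site.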